The Complete Technical SEO Audit Checklist

Complete search optimization is just as much about good technical SEO as it is about content and user friendliness. In order to have your website perform optimally for search engine rankings you need to make sure that your technical SEO strategy is without flaws. A technical SEO checklist and audit can help.

Search engine algorithms from Google or Bing send crawlers to your site to analyze the HTML code, JavaScript, performance, structure and more. This means that a fully optimized website needs more than just keyword density and good content for successful SEO results. Many online marketers and business owners know that SEO can be important, but technical SEO auditing is complex and may be difficult for anyone without expertise.

A good way to find out what your site needs is to work through a technical SEO audit checklist that assesses your website’s health. We’ve created this complete technical SEO audit guide to help you work through some of the most important elements of modern search optimization. A checklist like this will help online businesses and eCommerce sites determine where they could be suffering.

Work through our audit guide below to be sure that your technical SEO is complete. We’ve designed our guide using nothing but free technical SEO tools, so that you can get a complete audit much more easily.

What is a Technical SEO Audit?

A technical SEO audit is a process that checks various technical parts of a website to make sure they are following best practices for search optimization. This means technical parts of your site that relate directly to ranking factors for search engines like Google or Bing. The audit checklist can be done with something as simple as an Excel file.

Many “technical” SEO elements involve HTML elements, proper meta tags, website code, JavaScript, and proper website extensions.

For a technical SEO checklist, it’s not necessary to check every single part of an SEO campaign. There are many SEO factors that you should check separately, including on-page content best practices, keyword strategy, and more. But technical SEO is important because it means checking under the hood of your website for more complex issues that you may not know about.

It also means checking for technical issues that could be damaging user experience by causing your website to function poorly.

An audit checklist could mean going step-by-step through our guide and making note of any issues you find in an Excel file or a Word document. By tracking the issues you find, you will be able to address each one in turn, making sure that your website is optimized for search engine performance.

Follow our audit checklist guide for each of these items:

- Make Sure Your Content is Visible

- Ensure Your Analytics/Tracking is Set Up

- Check Your Canonicalization

- Accessing Google Search Console

- Check for Manual Actions

- Check Your Mobile Friendliness

- Check for Coverage/Indexing Issues

- Scanning your Site for 404s

- Auditing Your Robots.txt File

- Using the Site Command

- Check that Your Sitemap is Visible

- Secure Protocols & Mixed Content

- Check for Suspicious Backlinks

- Security Issues

- Checking Schema Markup

Make Sure Your Content is Visible

Content may not be a “technical” SEO ranking factor, but it is a ranking factor. So it’s a good idea to make sure it’s visible to search engines. This makes it a good first step for your technical SEO site audit. Check your site’s homepage, category pages, and product pages to make sure that your content is not only visible to humans, but also visible to Googlebot (some CMSs handle these pages differently so your checklist should include each kind).

In general if your site renders normally, then you shouldn’t have anything to worry about – particularly if your content looks good in Chrome. Googlebot uses a version of the Chrome browser engine to render pages.

You can disable JavaScript in Chrome to see if any important elements of your page go missing. Content, links, or nav-bar elements that fail to render could mean that Googlebot can’t see them, but not necessarily.

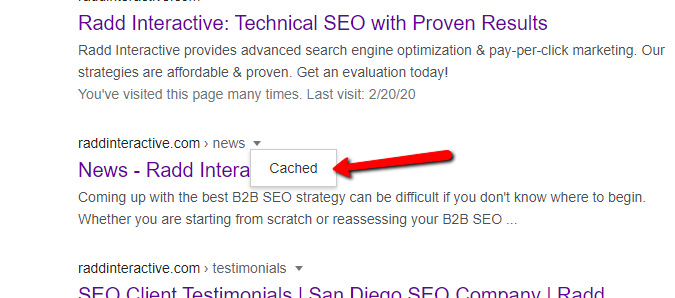

One way to check how Googlebot perceives your pages when going through your technical SEO audit checklist is to check the cached version in the SERP. Use the “site:” search operator in Google to find your page in the index (e.g. “site:example.com/example-page-url”, but without quotation marks). Click on the small triangle next to the page’s URL/breadcrumb and click on “Cached.”

This will show you the cached version of the page that Google has in its index from the last time it was crawled. Don’t worry if it doesn’t show the most recent version of your page; it may be out of date if the page has not been crawled recently. For your technical SEO audit, check whether the cached version is missing important content elements, or whether content that’s built with JavaScript is not being rendered properly.

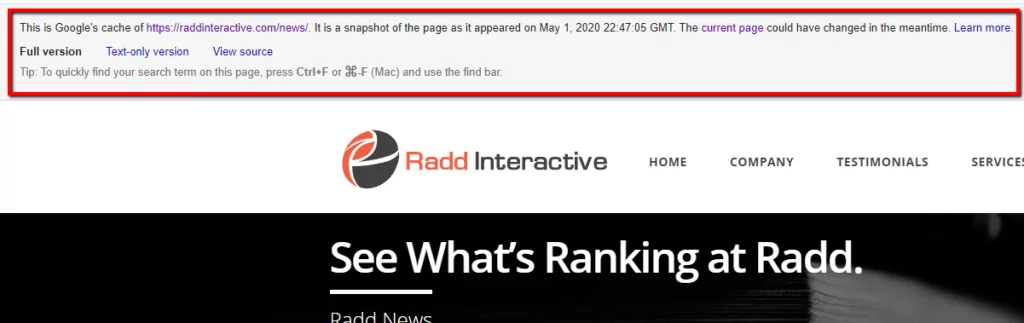

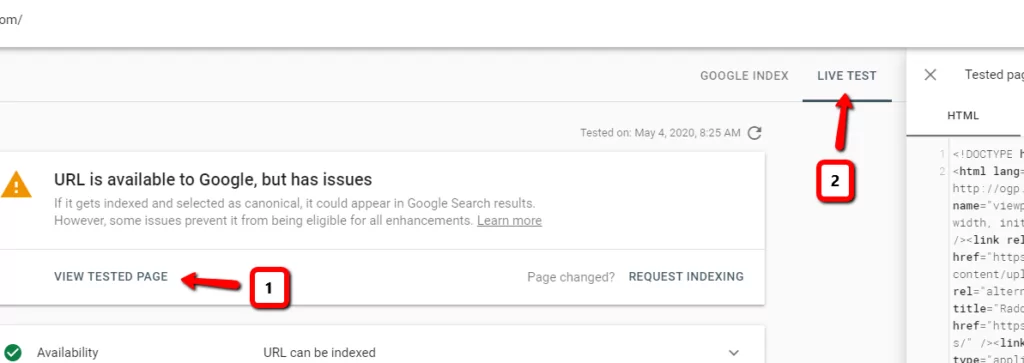

Another way to check how your page is being rendered by Google is to use reports available in Google’s Search Console. Put your page URL into the “URL Inspection Tool” to see its index status and any warnings about its performance. From here click on “Test Live URL,” then on “View Tested Page” to check the result and make sure that your page is being rendered completely.
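As a rough complement to the JavaScript-disabled check above, the sketch below looks for key phrases in a page’s raw HTML, before any script has run. The function name `missing_phrases` and the sample page are hypothetical, and a phrase reported as missing only suggests that the content depends on JavaScript; it does not prove that Googlebot cannot render it.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects text present in the raw HTML, skipping script/style bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(html, phrases):
    """Return the phrases that do not appear in the un-rendered HTML text."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical page: the review text only exists after JavaScript runs.
sample = """
<html><body>
  <h1>Blue Widget</h1>
  <div id="reviews"></div>
  <script>document.getElementById("reviews").textContent = "Great product";</script>
</body></html>
"""
print(missing_phrases(sample, ["Blue Widget", "Great product"]))
```

Any phrase this reports is worth checking by hand in the URL Inspection Tool.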

Ensure Your Analytics/Tracking is Set Up

If Google Analytics tracking is already set up for your site, it’s prudent to make sure that it’s working properly. Our technical SEO site audit guide can show you how.

Check your source code (press Control + u) of the home page, main category pages, and product pages for the presence of the tracking code associated with your Analytics account and property. Since some CMSs (content management systems) handle page templates differently, it’s a good idea to check each kind to make sure tracking is consistent.

The code should be installed in the head tags (between <head> and </head>) and usually includes an ID that you can match with your Analytics property/view.

Google’s Tag Assistant

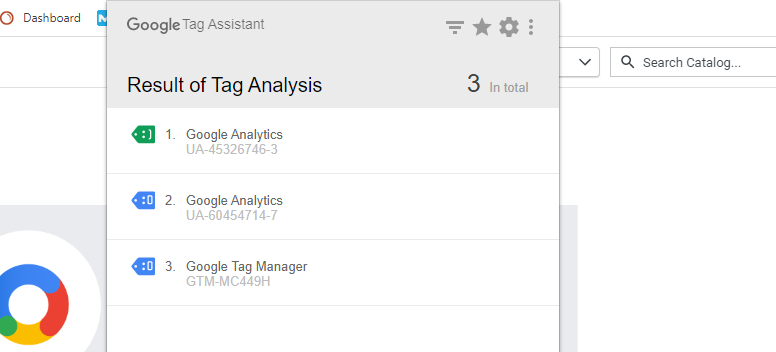

Google’s Tag Assistant browser plugin can help verify if the tracking code is installed correctly. If you have the plugin installed, click its icon while on the page you want to check and click “Enable.” Next reload the page and click the Tag Assistant icon again.

Once the page is loaded you will see information about any and all Google tags installed on the page. This free tool is provided by Google for marketers to make sure their tracking codes are installed correctly, and it’s easy to use.

If your tags show a blue or red icon then they may not be installed correctly and the next step of your website’s technical audit checklist can be fixing them. Tag Assistant can tell you what the issues are, and you can “Record” your browsing session to see how tags fire as you go from page to page.

Tag Assistant can help with your technical SEO audit by verifying your Analytics code, but it also helps with Google tracking tags designed for other marketing channels: Adwords Conversion Tracking, Remarketing, Floodlight, Google Tag Manager (GTM), and more.

Make note of any tags that are marked Blue or Red as these colors indicate problems with the tag’s installation.

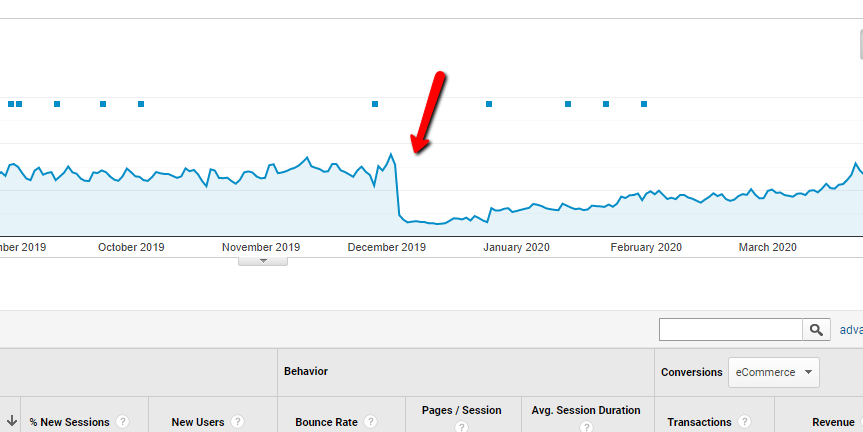

Analyze Google Analytics Traffic

Your Google Analytics account can also give you clues about tracking. Extreme shifts in data can be a red flag that your tracking code is not firing properly when pages load. In your Google Analytics data, look for periods with no sessions, sudden shifts in traffic, and very high or very low bounce rates; all of these can be symptoms of tracking code issues.

Proper tracking code installation is important for reliable data. If the tracking code is not installed properly, it becomes difficult to verify traffic and sessions, and harder to determine which pages are most important and which ones get the most traffic. It can also make it harder to accurately measure the results of your SEO campaign.

Finding the “UA-” prefix is one way to verify, but it’s not 100% reliable due to how HTML may show the code. If you know what type of tracking code you have, look for its presence in your source code. It’s important to note though that this step of the technical SEO audit also only indicates the presence of a tracking code, and not necessarily if it is working properly.
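The source-code check can be partly automated. The sketch below searches a page’s HTML for tracking IDs and reports whether each one sits inside the head tags. The two ID patterns (“UA-” property IDs and “GTM-” container IDs) are assumptions chosen for illustration, and the function name `tracking_ids` is hypothetical. Like the manual check, it only shows that an ID is present, not that the tag fires.

```python
import re

# Assumed patterns for illustration: "UA-" property IDs and "GTM-" container IDs.
ID_PATTERN = re.compile(r"\b(UA-\d+-\d+|GTM-[A-Z0-9]+)\b")
HEAD_PATTERN = re.compile(r"<head\b[^>]*>(.*?)</head>", re.IGNORECASE | re.DOTALL)


def tracking_ids(html):
    """Return (IDs found inside <head>, IDs found only outside <head>)."""
    head_match = HEAD_PATTERN.search(html)
    head_html = head_match.group(1) if head_match else ""
    in_head = set(ID_PATTERN.findall(head_html))
    anywhere = set(ID_PATTERN.findall(html))
    return sorted(in_head), sorted(anywhere - in_head)


# Hypothetical page source with one ID in the head and one in the body.
sample = """<html><head>
<script>ga('create', 'UA-12345-1', 'auto');</script>
</head><body><!-- GTM-ABC123 --></body></html>"""
in_head, outside_head = tracking_ids(sample)
print("In head:", in_head)
print("Outside head:", outside_head)
```

Running it against the home page, a category page, and a product page gives a quick consistency check across templates.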

Check Your Canonicalization

Check your site’s source code for a canonical tag such as <link rel=”canonical” href=”https://www.example.com/page”>. Searching the page’s source code for “canonical” is a simple way to verify.

Check that the referenced URL is live.

Next check category pages as well as product pages on your site and make note of results.
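For a site with many templates, the same check can be sketched in a few lines of Python. The example below pulls the canonical URL out of a page’s HTML; the class and function names are hypothetical, and fetching the page source is left to you.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of every <link rel="canonical"> in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel:
            self.hrefs.append(attr.get("href"))


def canonical_urls(html):
    """Return all canonical hrefs found; a healthy page should have exactly one."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.hrefs


page = '<head><link rel="canonical" href="https://www.example.com/page"></head>'
print(canonical_urls(page))
```

An empty list means no canonical tag was found, and more than one entry means the page is sending conflicting signals; both are worth noting in your checklist.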

Due to HTML formatting, the canonical tag may not always be easy to find. In this case Google’s Lighthouse browser plugin can be used to verify canonicalization. With the plugin installed, select “Options” and make sure the “SEO” box is checked, then click “Generate Report.” Once the report is generated, verify that under SEO > Passed Audits > Content Best Practices the report reads: “Document has a valid rel=canonical.” If the audit is listed under “Not Applicable” instead, Lighthouse did not find a canonical tag on the page.

(The Lighthouse app is a free technical SEO audit checklist tool from Google, and can be used to check other technical parts of your site!)

Why include this step in your site’s technical SEO audit checklist? Canonicalization is a way of telling search engines which version of a page is the “master version.” Often one page can be visited using multiple URLs. Canonicalizing these pages to one main version allows you to inform search engines like Google and Bing that these URLs are indeed the same page and are not merely duplicates competing against each other. This can vastly improve your ability to be indexed and help search engines understand your site better, giving your technical SEO performance a boost.

When pages compete against each other in search engine indexes, no single URL may perform well, or the wrong one may end up performing well. As part of your complete technical SEO audit, our guidance is to make sure your site is canonicalized correctly.

Unique URLs that should feature canonicalization include pages like:

- http://www.example.com

- https://www.example.com

- https://example.com

- https://example.com/index.php

- https://example.com/index.php?r…

These URLs may all be for the same page, but if not canonicalized properly then Google may have a hard time determining which one to index.

Best practice is for product/category pages to be canonicalized to a generic URL or the shortest URL with the fewest sub-directories (e.g. www.example.com/collections/sub-directory/sub-directory2/xyzproduct canonicalized to www.example.com/xyzproduct).

You can also use the URL Inspection Tool or the “Coverage” report in Search Console to see which URLs are being indexed. With this information from your audit you can make sure only the correct pages are being indexed.

Accessing Google Search Console

Google’s Search Console is an indispensable tool for auditing your site’s SEO health and it’s available free to anyone who owns a website. Part of your technical SEO audit checklist should be ensuring you can access Search Console correctly.

Go to Google Search Console and ensure that you are logged in to the correct Gmail account for your site. If you already have a Search Console account you’ll find it here, or you can set one up.

In Search Console find the account property for your site. Verify that the property’s domain and protocol match the live version of the site (e.g. https://example.com is not the same as https://www.example.com). An important step in your complete SEO audit is making sure your site’s property is available and that it’s the correct version. These properties are not treated the same, and if you have the wrong one then the data won’t be accurate.

Search Console allows webmasters to check indexing status and optimize visibility of their site to Google. It also allows you to check for server errors, manual actions, messages, and more from Google that relate to your website’s SEO (our technical SEO audit guide will go over this more later on).

Make sure the protocol (“http:” vs. “https:”), subdomain (“www.”), and domain (“example.com”) all match the live version of the site. This means the right Search Console property is available for the rest of the technical audit checklist.
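The comparison is simple enough to do by eye, but a small sketch makes the rule explicit. It assumes you have copied the property URL out of Search Console by hand; the function name `property_mismatches` is hypothetical.

```python
from urllib.parse import urlsplit


def property_mismatches(property_url, live_url):
    """List the parts (protocol, host) where a property differs from the live site."""
    prop, live = urlsplit(property_url), urlsplit(live_url)
    problems = []
    if prop.scheme != live.scheme:
        problems.append(f"protocol: {prop.scheme} vs {live.scheme}")
    if prop.hostname != live.hostname:
        problems.append(f"host: {prop.hostname} vs {live.hostname}")
    return problems


print(property_mismatches("http://example.com/", "https://www.example.com/"))
```

An empty list means the property and the live site agree on both protocol and host.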

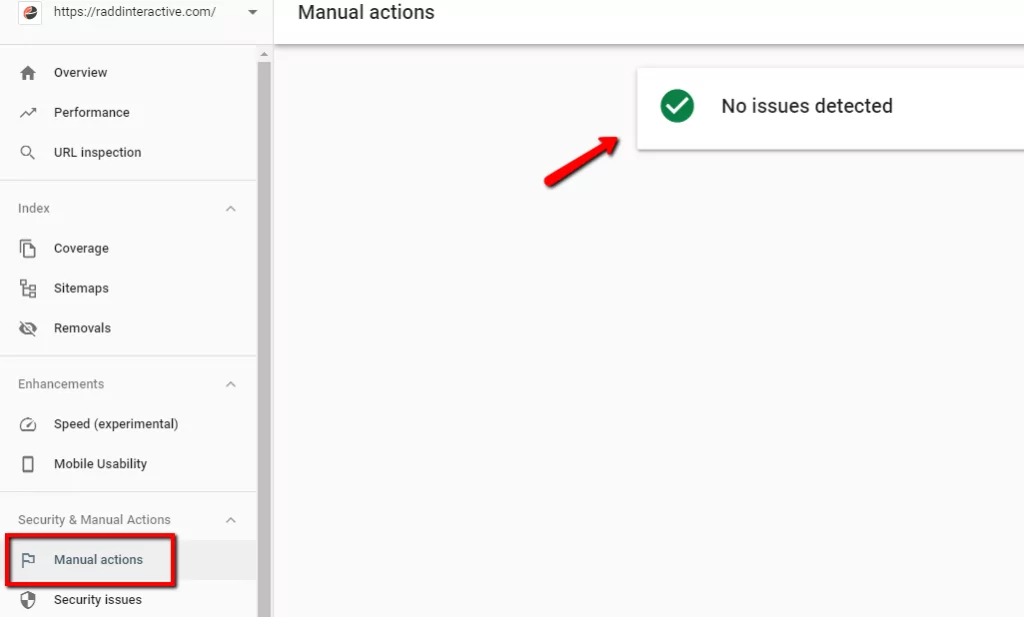

Check for Manual Actions

On the left side navigation panel of your Search Console, select Security & Manual Actions > Manual Actions. If you regularly go through a technical SEO audit checklist then you can always check for manual actions here.

Manual Actions against a site or page mean that a human reviewer at Google (not the algorithm) has determined it is not compliant with Google’s Webmaster Quality Guidelines and has penalized your site. Manual Actions are exceedingly rare but critical: they mean the page can be demoted or even removed from Google’s index. This is an important step in your technical SEO audit checklist. If you haven’t checked for manual actions before, it’s possible that one is causing your site to seriously underperform.

Usually manual actions only happen because of bad-faith attempts to manipulate the Google search index or to improve SEO through black-hat means.

If you find that your site has a manual action then your immediate next step should be to fix it. Fortunately Search Console will tell you what the penalty is for. As part of your technical website audit checklist you can also review the Google webmaster guidelines to ensure that your site is not violating any of Google’s rules.

In Search Console’s Manual Actions report you’ll see a count of manual actions against your site at the top of the report. If your site has no manual actions then you will see a green check mark instead.

If all of your issues have been fixed, or if you believe the manual action was made in error then you can “Request a Review.” The request may take several days, so be patient when waiting for a response. According to Google’s guidance your request should:

- Explain the exact quality issue on your site.

- Describe the steps you’ve taken to fix the issue.

- Document the outcome of your efforts.

Check Your Mobile Friendliness

Google announced Mobile-First Indexing in November of 2016 and began rolling it out in March of 2018. In March of 2020 it announced that the entirety of the web would be moved to mobile-first indexing, with the switch planned for later that year. This strategy means that a well-designed mobile version of your site is important for long-term and continued SEO relevance.

Google claims that mobile sites do not have a ranking advantage over non-mobile sites in general, but that the quality of a mobile site will affect its ranking in mobile search results. If you have a mobile version of your site (which you definitely should!) then include it in your technical SEO site audit checklist to make sure it’s performing well.

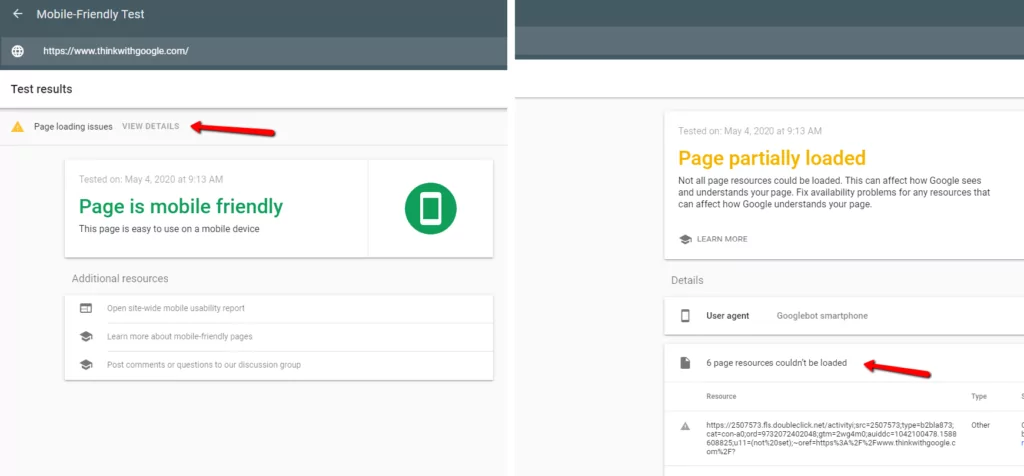

Mobile friendliness is very multi-faceted and includes a wide range of variables (too many to completely go over here) but the Mobile-Friendly Test tool from Google is the simplest and one of the best ways of determining how Google perceives the mobile version of a site. If there are any “Page Loading Issues” make note of them in your SEO site audit checklist.

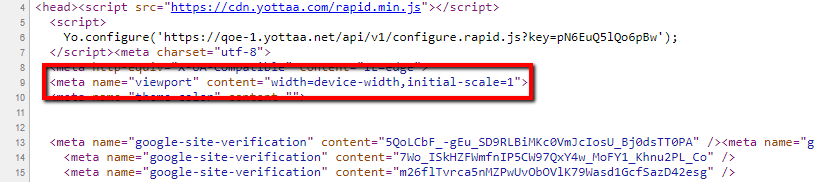

A simple way to check is to resize your web browser window and see whether the layout uses responsive formatting that automatically adjusts to the new width. Responsive formatting is one of the more fundamental elements of mobile friendliness. You can also look for the <meta name=”viewport”> HTML element in your source code.
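Looking for the viewport element can also be scripted. The sketch below reports whether a page’s HTML contains a viewport meta tag that sets width or initial-scale; the class and function names are hypothetical, and a passing result is only one signal of mobile friendliness.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Remembers the content attribute of the <meta name="viewport"> tag."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "viewport":
            self.content = attr.get("content") or ""


def has_responsive_viewport(html):
    """True if a viewport meta tag sets width or initial-scale."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    content = finder.content.lower()
    return "width" in content or "initial-scale" in content


page = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(page))
```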

Use the Mobile Friendly Test Tool

Here is another checklist item for auditing technical SEO on mobile. Google provides marketers with many tools for auditing their technical set-up, but this one is designed specifically for mobile.

Make sure your mobile site’s text is easy to read and see. There’s no official standard for this, but text that is too small or too close together can hurt you. Visually inspect your site on a mobile device or by using the Mobile Friendly Test Tool.

If for some reason the tool cannot load the page then it could indicate that the Googlebot smartphone version can’t even load your page. Retry with another URL, check on an actual mobile device, or move on to the next step in our technical SEO audit checklist guide.

Next, if the test shows “unloadable resources” it means that external elements of the page like images, CSS files, or scripts may not be loading properly. As part of your technical SEO audit you’ll want to make sure these resources are in the correct file location and loading quickly enough (not timing out), and that they are not hidden behind log-ins or blocked to Googlebot by your robots.txt file.

Checking Mobile in Search Console

Finally, if you want to confirm mobile friendliness as part of your technical SEO audit then you can also use Search Console. Go to Search Console and from there find the same data in Enhancements > Mobile Usability to look for warnings.

Search Console supplies direct information for how your site is performing in Google’s index. The Mobile Usability report will tell you if Googlebot has found any issues with the site’s mobile design and it can give you clues on whether mobile issues are hindering traffic.

Here the report will give you information about your pages, showing:

- Status: A page has two possible states:

- Error: The page is not mobile friendly. This could mean any number of issues are affecting the mobile friendliness of your site to a level that Google has deemed too extreme.

- Valid: The page is mobile friendly. This means it meets the minimum requirement for mobile friendliness.

- Pages: The count of pages in Error state with this issue.

Common issues reported in Search Console include lack of viewport coding, content that’s too wide to fit the screen, text that’s too small to read, or clickable elements that are too close together. Each of these should be something to watch out for as part of your website technical auditing checklist.

The advantage of Search Console is that it allows you to audit your site’s mobile friendliness as a whole – showing you information for any and all pages with mobile issues.

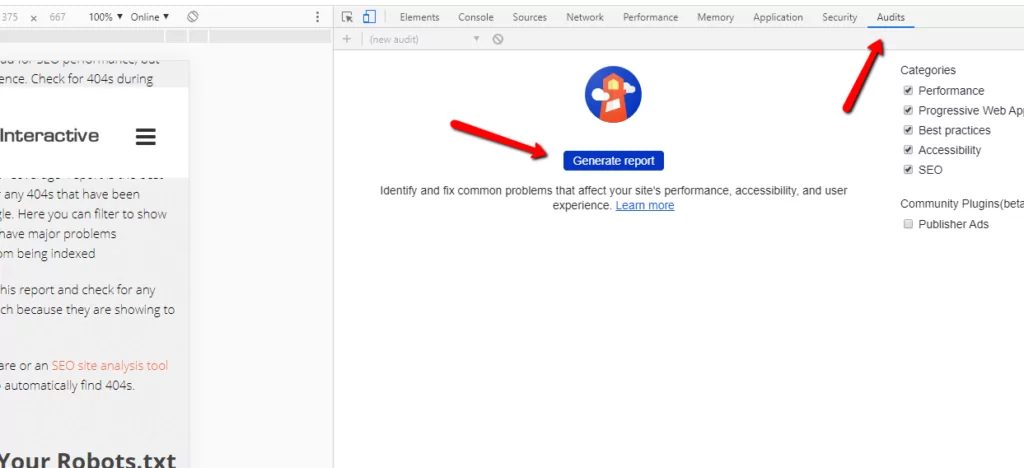

Checking Mobile Using Google’s Lighthouse

Google’s Lighthouse browser plugin/app can be used to verify aspects of mobile friendliness. With the plugin installed, select “Options” and make sure the “SEO” box is checked, then click “Generate Report.” Once the report is generated, verify that under Passed Audits > Mobile Friendly the report reads: “Has a <meta name=”viewport”> tag with width or initial-scale.”

The Lighthouse tool emulates a smartphone using cellular data to load your page, so it simulates how your site will load over a slow and unreliable mobile connection. The tool will look at important metrics like “First meaningful paint” (how long it takes for the first important content to appear) or “First interactive” (how long it takes before people can actually interact with the page). These metrics are hugely important to good user experience.

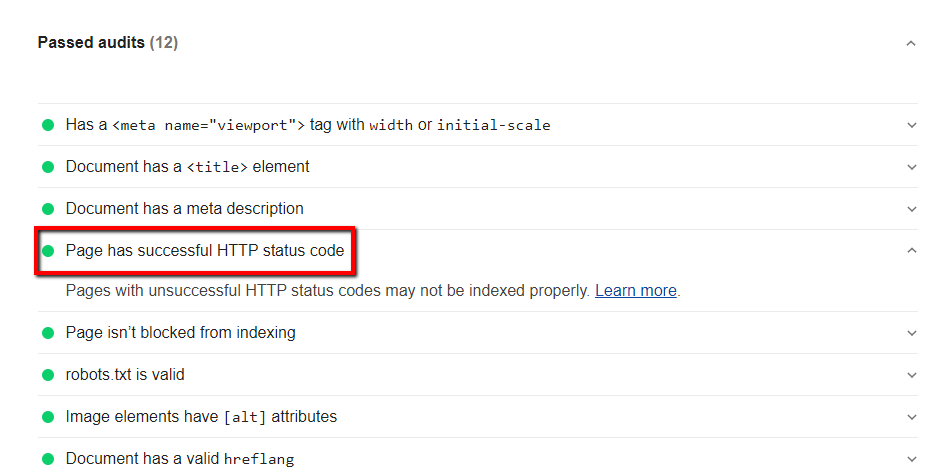

The Lighthouse report will share lots of the same information that you can find using the Mobile Friendly Test tool, or Search Console. Even if the information is redundant, it can still be useful to confirm your suspicions by verifying information about your site’s viewport coding, canonical status, hreflang tags, HTTP status code, <title> tag presence, and more.

Check for Coverage/Indexing Issues

Your SEO audit checklist should make sure that your site isn’t being hindered by any issues that could cause it to not be indexed.

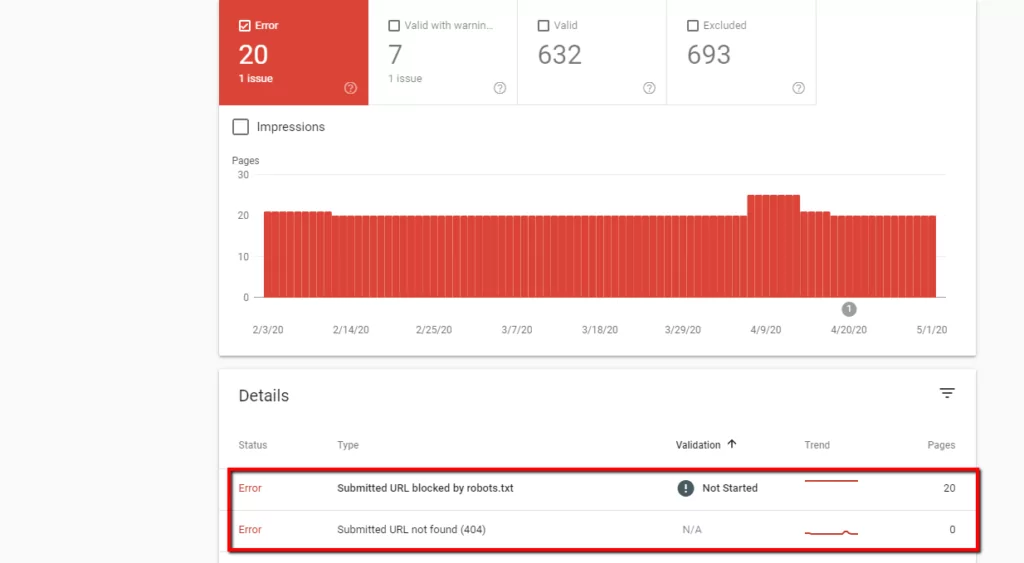

In Search Console, select Coverage from the left side menu. Make a note of any extreme or recent drops in the number of total “Valid” pages. Your technical SEO audit checklist should also make note of any spike or large increase in listed “Errors” or pages that are “Valid with warnings”.

List “not found” pages, soft 404s, server errors, or any other issues mentioned by Search Console along with the total number of pages with each issue – these should be priority when you set out to fix your site’s SEO issues.

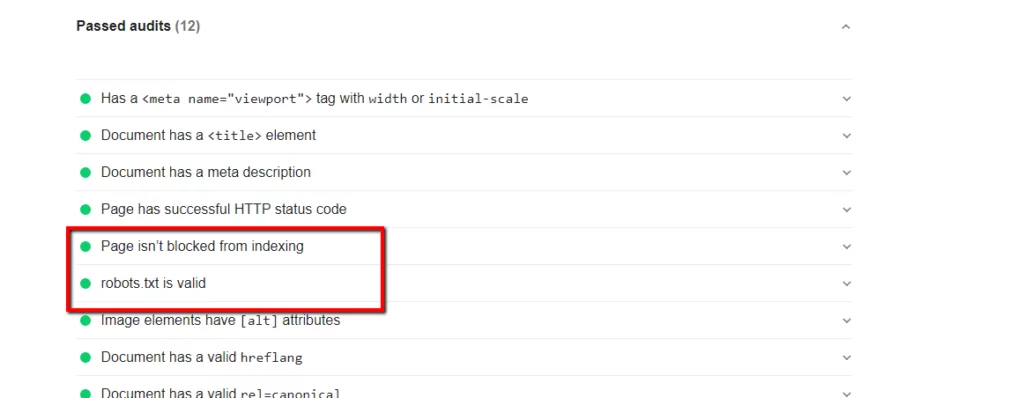

Google’s Lighthouse browser plugin/app can also be used to audit indexing. With the plugin installed, select “Options” and make sure the “SEO” box is checked, then click “Generate Report.” Once the report is generated, verify that under SEO > Passed Audits > Crawling and Indexing the report reads: “Page isn’t blocked from indexing” and “robots.txt is valid.”

Large drops in the number of indexed pages might indicate problems with robots.txt or crawlability. They might also be a symptom of other issues such as 404s, or improper redirects.

Scanning your Site for 404s

At this point it’s also a good idea to check your site for 404s. Broken links, dead pages, and status 404 URLs are not only bad for SEO performance, but bad for user experience. As part of your technical SEO audit checklist, look for 404s using Search Console or some of the other free tools available to you.

The Search Console “Coverage” report is the best place to check for any 404s that have been discovered by Google. Here you can filter to show “Errors,” meaning pages that have major problems preventing them from being indexed.

Scroll down below this report and check for any pages that are listed as errors because they return a 404 to Googlebot.

You might also choose to use software or an SEO site analysis tool to crawl your site to automatically find 404s.
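If you already have a list of URLs (from your sitemap, for example), a short script can do a basic version of this scan. The sketch below is a minimal illustration using only Python’s standard library; the function names are hypothetical, and it checks a list you supply rather than crawling the site.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the final HTTP status for url, or None if the request failed outright."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        # urlopen follows redirects, so this is the status of the final destination.
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions
    except OSError:
        return None  # DNS failure, refused connection, timeout, and similar


def find_not_found(urls, fetch=fetch_status):
    """Return the URLs that answer with a 404 status."""
    return [url for url in urls if fetch(url) == 404]
```

Calling `find_not_found(["https://www.example.com/", "https://www.example.com/old-page"])` would return whichever of those URLs answer with a 404. Some servers handle HEAD requests differently from normal page loads, so confirm anything surprising in a browser.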

Auditing Your Robots.txt File

Many SEOs are aware that the robots.txt file on your site regulates permissions for robots visiting your site, including Googlebot and Bingbot. This means that by blocking bots from certain sections of your site, you can make sure that only pages you want to be indexed are getting added to search engines.

The first step for your technical SEO audit is to make sure your robots.txt file is in the correct location. The URI should always be “/robots.txt” and it should always be attached directly to the main domain so that bots can always find it in the same spot (e.g. www.example.com/robots.txt).

The most basic format of your file will look like:

User-agent: *

Disallow: /

The “User-agent” line names the bots that the rules apply to, and each “Disallow” line names a part of your site that those bots are blocked from. As part of your technical SEO audit checklist, our guide suggests that if you do have any of the Google bots listed here you make sure that you are not inadvertently blocking them from important directories.

The “*” wildcard here is used to denote all bots, which means that in effect these commands apply to any bot that visits the site.

With the command “Disallow: /”, any area of your site that comes after the “/” from your main domain will be blocked (in effect the whole site!). Combined with “User-agent: *” as in the example above, this blocks every bot from the entire site, so it is only appropriate for a site you want kept out of search, such as a staging copy. A “Disallow:” line with nothing after it blocks nothing.

The most common sections to block include:

Disallow: /cart

Disallow: /login

Disallow: /logout

Disallow: /register

Disallow: /account

When checking the status of your technical SEO health, make sure that important sub-directories and areas of your site aren’t being blocked from Googlebot or Bingbot. You should also make sure that important theme files are not being blocked, as this can negatively affect the ability of search engine bots to render your pages correctly.
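One way to test this without waiting for a crawl is Python’s built-in robots.txt parser. The sketch below takes the text of a robots.txt file and a list of URLs you consider important, and returns the ones a given bot would be blocked from. The function name `blocked_urls` and the sample URLs are hypothetical, and the standard-library parser may not match Googlebot’s own matching rules in every edge case.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, user_agent, urls):
    """Return the URLs that robots_txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Sample rules in the same style as the common sections listed above.
rules = """
User-agent: *
Disallow: /cart
Disallow: /login
"""
important = [
    "https://www.example.com/",
    "https://www.example.com/collections/xyzproduct",
    "https://www.example.com/cart",
]
print(blocked_urls(rules, "Googlebot", important))
# prints ['https://www.example.com/cart']
```

Here only the cart page is blocked, which is the intended result. If a product or category URL shows up in the output, the rule that matches it deserves a closer look.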

Finally, your technical SEO website audit checklist should include making sure that you are not blocking other Google and Bing bots as well, unless you have a specific reason to prevent them from looking at certain areas of your site.

These bots include:

- AdSense

- AdsBot Mobile Web Android (checks Android web page ad quality)

- AdsBot Mobile Web (checks iPhone web page ad quality)

- AdsBot (checks desktop ad page quality)

- Googlebot News

- Googlebot Image

- Googlebot Video

- Googlebot (Smartphone)

- Google Favicon (retrieves favicons for various services)

- AdIdxBot (for checking Bing Ads)

- BingPreview (used to generate page snapshots)

Using the Site Command

Indexing status can also be checked via a simple site command. In the Google search bar type the root domain of the live site after the “site:” command (e.g. “site:example.com”, without any quotes).

Make note of the reported number of results at the top of the search engine results page.

Performing a site command in Google allows you to determine that the site is being indexed properly as well as the approximate number of pages being indexed. This is a rudimentary method of checking index status and isn’t 100% accurate – but it can be a simple step in your site audit checklist to make sure your pages are actually appearing in search.

The results from a site command are approximations based on how Google returns results from its index, so they shouldn’t be treated as an exact number. However, if the number is extremely low it may represent an issue with your indexing, and if the number is too high it could mean an issue with your canonicalization is allowing duplicate URLs to be indexed.

Check that Your Sitemap is Visible

The next step in our technical SEO audit guide is to make sure you have your sitemap set up and submitted properly. Sitemaps are not a requirement (you can still get indexed without them), but having one is highly advised for faster SEO results and regular indexing.

A sitemap is a file where you provide information about the pages and files on a site along with the relationship between them. This file acts as a list of pages which can be crawled by Google to more easily index a site.

Most of the time you can check for your sitemap at the “/sitemap.xml” URI from your main domain, but it’s okay to have your sitemap located at another URI as long as search engines can find it.

For your technical SEO, the only way to know for certain is to just submit it directly to Google.

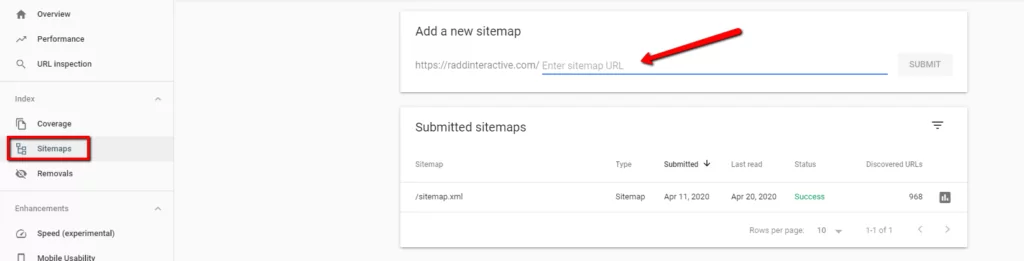

In Search Console, select Sitemaps from the left side menu. Make note of whether or not a sitemap has been submitted and check the date of submission. As part of your technical SEO auditing checklist it’s a good idea to make sure that your sitemap is up-to-date and is updated automatically (most content management systems can do this).

Make sure that your sitemap only includes status code 200 URLs – there should be no redirects, 404s, or non-canonical URLs (separate URLs for the same pages).
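The status check on sitemap entries can be sketched as follows. The example parses a standard XML sitemap and flags every URL that does not answer with status 200. The `fetch_status` argument is an assumption: a function you supply that returns the status code for a URL without following redirects, so that redirected entries show up as 301 or 302 instead of 200.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]


def non_200_entries(urls, fetch_status):
    """Return (url, status) pairs for sitemap URLs that do not answer 200."""
    results = [(url, fetch_status(url)) for url in urls]
    return [(url, status) for url, status in results if status != 200]


# Hypothetical two-page sitemap.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
</urlset>"""
print(sitemap_urls(sample))
```

Anything returned by `non_200_entries` is a candidate for removal from the sitemap or for a fix on the site itself.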

PageSpeed Insights for Both Mobile & Desktop

Google has announced that page speed is a ranking factor for both desktop and mobile search rankings. Slow pages can also negatively affect user experience, increase exits, and increase bounce rate. For this section of your technical SEO audit checklist you may want to consider the speed of both your desktop and mobile site versions.

Go to the Google PageSpeed Insights tool and enter your site’s URL (this is yet another free technical SEO auditing tool from Google).

Make a note of speed and optimization for both the desktop and mobile version of your site and update your checklist with any issues.
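If you have many pages to test, PageSpeed Insights also has a public API that returns the same data as JSON. The sketch below only builds the request URL and reads the performance score out of a response you have already downloaded and parsed. The endpoint and the response fields are used here as we understand them, so treat them as assumptions and check Google’s API documentation before relying on them; the function names are hypothetical.

```python
from urllib.parse import urlencode

# PageSpeed Insights API endpoint (v5); heavier use may require an API key.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy):
    """Build the request URL for one page and one strategy ("mobile" or "desktop")."""
    if strategy not in ("mobile", "desktop"):
        raise ValueError("strategy must be 'mobile' or 'desktop'")
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(response):
    """Pull the 0-1 performance score out of a parsed JSON response, if present."""
    categories = response.get("lighthouseResult", {}).get("categories", {})
    return categories.get("performance", {}).get("score")


for strategy in ("mobile", "desktop"):
    print(psi_request_url("https://www.example.com/", strategy))
```

Requesting both strategies for the same page mirrors the manual step of noting mobile and desktop results side by side.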

Secure Protocols & Mixed Content

HTTPS is a ranking signal. By default, your website should be HTTPS if such a version exists. If a non-secure version of the site exists and has not been redirected, then in effect two versions of the site exist. Canonicalization is one solution, but a 301 redirect is the best way of informing search engines about the new version of a site.

For many webmasters not familiar with technical SEO this may not seem like an issue. For anyone going through a checklist of their site’s technical SEO then it’s a good idea to verify. There are a few ways to get a sense of your site’s security:

- Method 1: Check the site’s homepage, category pages, and product pages for HTTPS. Check for multiple versions of the site (by substituting http://www, http://, https://, and https://www to make sure that each one is redirected to one main HTTPS version)

- Method 2: Google’s Lighthouse browser plugin can be used to verify HTTPS status. With the plugin installed, select “Options” and make sure the “Best Practices” box is checked, then click “Generate Report.” Once the report is generated, verify that under Best Practices > Passed Audits the report reads: “Uses HTTPS.” Redirects will still have to be checked manually.

When redirecting multiple protocols & subdomains best practice for SEO is to use permanent (301 style) redirects. Non-permanent (302 style) redirects can confuse search engines and may result in loss of authority.
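The manual redirect check can be sketched in Python. The example below builds the four protocol and subdomain variants of a domain and reports any variant that does not answer with a 301 pointing straight at your main URL. The function names are hypothetical, and the comparison is deliberately strict: a redirect chain, or a Location header that differs from the main URL even by a trailing slash, is reported for manual review.

```python
import http.client
from urllib.parse import urlsplit


def site_variants(domain):
    """The four protocol/subdomain combinations for a bare domain such as example.com."""
    return [f"{scheme}://{host}/" for scheme in ("http", "https")
            for host in (domain, "www." + domain)]


def head_once(url, timeout=10):
    """Request url without following redirects; return (status, Location header)."""
    parts = urlsplit(url)
    conn_class = (http.client.HTTPSConnection if parts.scheme == "https"
                  else http.client.HTTPConnection)
    conn = conn_class(parts.netloc, timeout=timeout)
    try:
        conn.request("HEAD", parts.path or "/")
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def redirect_problems(domain, main_url, fetch=head_once):
    """Describe every variant that is not main_url and does not 301 straight to it."""
    problems = []
    for url in site_variants(domain):
        if url == main_url:
            continue
        status, location = fetch(url)
        if status != 301 or location != main_url:
            problems.append(f"{url} -> {status} {location}")
    return problems


print(site_variants("example.com"))
```

Calling `redirect_problems("example.com", "https://www.example.com/")` against a live site makes three requests, one for each variant other than the main URL.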

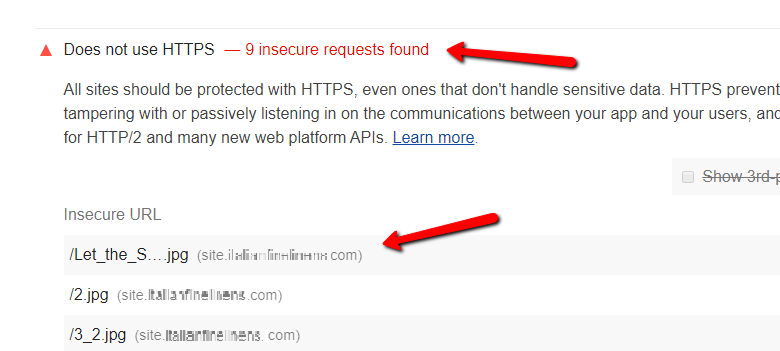

Auditing for Mixed Content

Having your domain set up with an HTTPS protocol is important for site security, but you should ensure that page resources, documents, and theme files are also secure – otherwise you may have “mixed content.”

For your technical SEO site audit it’s best to use Google Lighthouse.

You can use the free browser extension for Lighthouse, but it is also built into the Chrome browser itself. Run Lighthouse on your site to check for any mixed content: on the page right-click and select “Inspect”, go to the “Audits” tab in the panel that opens (labeled “Lighthouse” in newer versions of Chrome), and then click on “Generate Report.”

The tool will list page elements that are called via an insecure URL, giving you the opportunity to add them to your full SEO audit checklist.
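For a quick first pass outside of Lighthouse, the sketch below scans a page’s HTML for resources requested over plain HTTP. The tag list and names are assumptions made for illustration. It only sees resources referenced directly in the HTML, so anything loaded from CSS or JavaScript still needs the Lighthouse check.

```python
from html.parser import HTMLParser

# Tags whose src attribute loads a sub-resource into the page.
SRC_TAGS = {"img", "script", "iframe", "audio", "video", "source", "embed"}


class InsecureResourceFinder(HTMLParser):
    """Collects (tag, url) pairs for page resources loaded over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        url = None
        if tag in SRC_TAGS:
            url = attr.get("src")
        elif tag == "link" and "stylesheet" in (attr.get("rel") or "").lower().split():
            url = attr.get("href")
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def insecure_resources(html):
    finder = InsecureResourceFinder()
    finder.feed(html)
    return finder.insecure


page = '<img src="http://www.example.com/a.png"><a href="http://other.example/">link</a>'
print(insecure_resources(page))
```

Ordinary links to other pages are ignored on purpose, since a plain hyperlink to an HTTP page is not mixed content.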

Check for Suspicious Backlinks

Too many unnatural or spammy links can be an indicator to Google that a site does not have quality content or is not endorsed by credible websites. Malicious or spammy backlinks can hurt your site’s rankings and being aware of them can allow you to “disavow” unwanted links if necessary.

It’s rarely necessary to disavow links, but a prudent step in your technical SEO audit checklist is to at least be aware of any shady sites linking back to you and to see what sort of link neighborhood you’re in.

Go to Search Console, select Links in the left side menu, and then select “More” under “Top Linking Sites.” Scan for sites that seem like spam or have domain names that contain explicit/adult subject matter.

A high number of backlinks from sites that are not related to your site could also be bad for SEO, just keep in mind that Google’s algorithm can catch spammy links and devalue them – reducing their harm to your site. If you include this step in your technical SEO site audit checklist then keep in mind that disavowing links without understanding their effect on your site could potentially cause you to lose rankings.

In 2012 Google’s Penguin algorithm update hugely changed the way that the search engine handled backlinks, especially spammy link building practices. It also meant that the algorithm became much more sophisticated in devaluing spammy links automatically.

If your complete SEO audit reveals links that you know are bad such as “PPC” links (backlinks related to pills, porn, or casinos) then you can use the disavow tool in Search Console to tell Google that you do not vouch for those links.

Disavowing links is definitely an advanced step and is rarely recommended, but if bad backlink building practices or black-hat SEO lead to a Manual Action then it may be worthwhile to check your links as part of your technical SEO audit checklist.

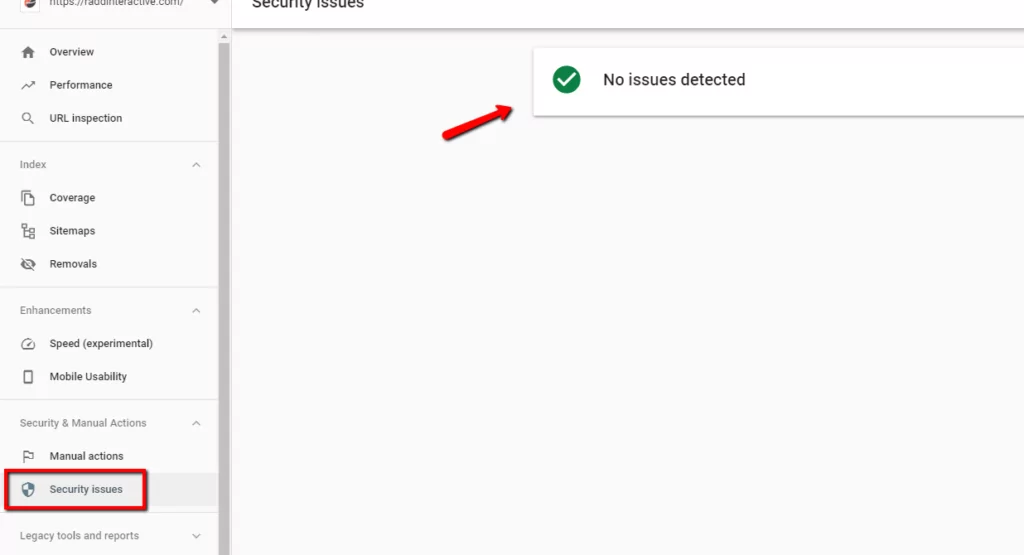

Security Issues

This section of Search Console provides warnings about potential security issues that can include malware, deceptive pages, harmful downloads, and uncommon downloads. These warnings are located in the “Security Issues” report and are an important element to check as part of any complete technical SEO audit checklist. If your site has security issues that Google can detect, then it can severely hurt your SEO performance.

Go to Search Console, select “Security Issues” on the left side and make note of any warnings. Check for these messages in your Search Console whenever you go through this step of the website technical audit checklist.

Security issues fall into the following categories:

- Hacked content: Content or code placed onto your site by hackers using a security vulnerability.

- Malware and unwanted software: Google defines this as “software that is designed to harm a device or its users, that engages in deceptive or unexpected practices, or that negatively affects the user.”

- Social engineering: This means any content that tricks visitors into doing something dangerous, including downloading deceptive software or something that jeopardizes their private information.

Make note of any issues you find here and immediately begin working to resolve them. If you have a site developer then you should have them review your site for any relevant areas that could be causing security issues and fix them using common security best practices.

Once this step is complete you can request a review in the same area of your Search Console account, and Google will verify that the issue has been fixed.

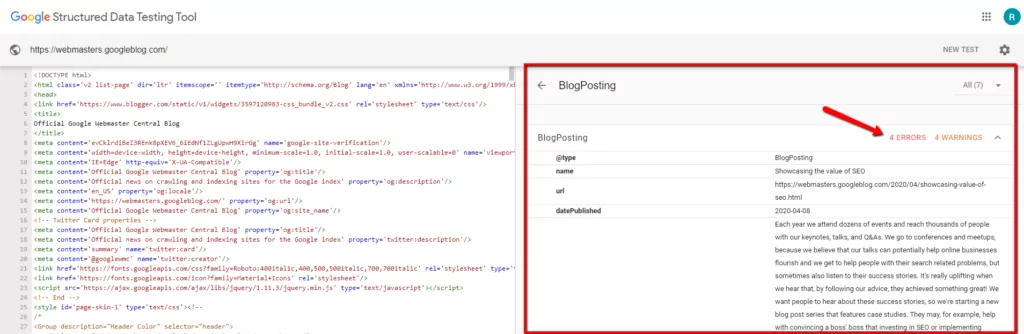

Checking Schema Markup

Finally you’ll also want to check your structured data (if you have any) to make sure that it is working properly. To be clear, structured data (also called schema) is not an SEO ranking factor. You do not need structured data on your site to rank, and in many cases it’s not necessarily useful. But structured data can improve how you appear in search results and can have a secondary benefit of improving click-through-rate to your site (CTR).

Google offers the Structured Data Testing Tool to webmasters precisely for checking their structured data. You can submit one of your site’s URLs into the tool to see if there are any issues that could prevent your schema from being used in search results.

The Structured Data Testing Tool also lets you generate a sample view of a result from your data. While using the tool, click “validate” and afterward check for the “Preview” button to see if your schema markup is displaying properly.

Checking Schema Markup in Search Console

Finally, you can also look for warnings from Google about your structured data using reports in Search Console. Visit the “Enhancements” section of Search Console including the “Unparsable structured data” report where you can receive information about your schema or any unreadable code.

If you do not see this report available in your Search Console portal it means that you do not have any issues.
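Unparsable structured data is often just malformed JSON, which you can catch before Google does. The sketch below assumes your schema is embedded as JSON-LD script blocks (one common format, though not the only one) and reports any block that fails to parse. The class and function names are hypothetical, and passing this check does not mean the markup is eligible for rich results.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of each <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def check_json_ld(html):
    """Return (parsed blocks, error messages for blocks that are not valid JSON)."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    parsed, errors = [], []
    for raw in collector.blocks:
        try:
            parsed.append(json.loads(raw))
        except json.JSONDecodeError as error:
            errors.append(str(error))
    return parsed, errors


# Hypothetical block with a trailing comma, a common cause of parse failures.
page = '<script type="application/ld+json">{"@type": "Product",}</script>'
parsed, errors = check_json_ld(page)
print(len(parsed), "valid block(s),", len(errors), "unparsable block(s)")
```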

Get More Information

Once you have completed your technical SEO audit checklist you can begin to improve your site’s SEO health and performance. Good SEO performance is a complex and time-consuming task in many cases, but following best practices means that you are much better suited for organic performance in the long run.

Contact us for information on professional technical SEO services for auditing and for resources that can improve your website’s performance.